Handling nonlinearly separable classesīy construction, SoftMax regression is a linear classifier. As such, numerous variants have been proposed over the years to overcome some of its limitations. logistic regression), SoftMax regression is a fairly flexible framework for classification tasks. Doing so enables us to get rid of this over-parameterization issue.

Without loss of generality, we can thus assume for instance that the weight vector w associated with the last class is equal to zero. If one does not account for this redundancy, our minimization problem will admit an infinite number of equally likely optimal solutions. The matrix of weights W thus contains one extra weight vector not required to solve the problem. Starting from the definition of P(y=i|x), it can be shown that subtracting an arbitrary vector v from every weight vector w does not change the output of the model (left as an exercise for the reader, I’m first and foremost a teacher !).

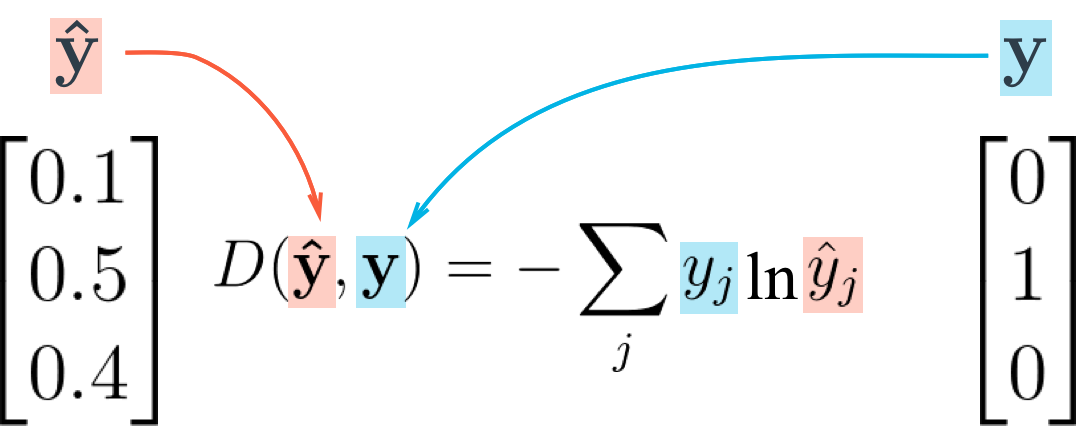

Categorical cross entropy how to#

Hence, it is symmetric positive semi-definite and our optimization problem is convex! Over-parameterizationīefore moving on with how to find the optimal solution and the code itself, let us emphasize a particular property of SoftMax regression: it is over-parameterized! Some of the model’s parameters are redundant.

From there, it is straightforward to show that its smallest eigenvalue is larger or equal to zero. Once again, it follows rather closely the derivation presented in this post for the logistic regression. Continuing this derivation once more would yield the Hessian of our problem.
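The over-parameterization and convexity claims can be checked numerically. What follows is a minimal pure-Python sketch, not the author's implementation. The helper names (`softmax_probs`, `shift_all`, `hessian_quadratic_form`), the random data and the shift vector `v` are hypothetical choices made for illustration only.

The sketch checks three things:

- Subtracting the same vector v from every weight vector leaves P(y=i|x) unchanged.
- Choosing v equal to the last weight vector pins that vector to zero.
- The quadratic form uᵀHu of the categorical cross-entropy Hessian is never negative.

The third check relies on a standard identity, assuming one-hot labels. For sample xₙ with predicted probabilities pₙₖ and aₙₖ = uₖ·xₙ, the contribution to uᵀHu is Σₖ pₙₖ aₙₖ² − (Σₖ pₙₖ aₙₖ)². This is a variance under the predicted probabilities, so it cannot be negative. Sampling random directions illustrates positive semi-definiteness but does not prove it.

```python
import math
import random


def softmax_probs(W, x):
    """Return [P(y=0|x), ..., P(y=K-1|x)] for a list W of K weight vectors."""
    scores = [sum(wj * xj for wj, xj in zip(w, x)) for w in W]
    m = max(scores)  # subtracting the max score avoids overflow in exp
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def shift_all(W, v):
    """Subtract the same vector v from every weight vector in W."""
    return [[wj - vj for wj, vj in zip(w, v)] for w in W]


def hessian_quadratic_form(W, X, U):
    """Compute u^T H u, where H is the Hessian of the categorical cross-entropy
    summed over the samples in X and U holds one direction vector u_k per class.
    Per sample this is a variance under p: sum_k p_k a_k^2 - (sum_k p_k a_k)^2,
    with a_k = u_k . x. Assuming one-hot labels, H does not depend on them."""
    total = 0.0
    for x in X:
        p = softmax_probs(W, x)
        a = [sum(uj * xj for uj, xj in zip(u, x)) for u in U]
        mean = sum(pk * ak for pk, ak in zip(p, a))
        second_moment = sum(pk * ak * ak for pk, ak in zip(p, a))
        total += second_moment - mean * mean
    return total


random.seed(0)
K, d, N = 3, 2, 5  # hypothetical sizes: 3 classes, 2 features, 5 samples
W = [[random.gauss(0.0, 1.0) for _ in range(d)] for _ in range(K)]
X = [[random.gauss(0.0, 1.0) for _ in range(d)] for _ in range(N)]
v = [0.7, -1.3]  # arbitrary (hypothetical) shift vector

# Over-parameterization: shifting every w by v leaves P(y=i|x) unchanged.
p = softmax_probs(W, X[0])
p_shifted = softmax_probs(shift_all(W, v), X[0])
print(max(abs(a - b) for a, b in zip(p, p_shifted)))  # zero up to rounding

# Choosing v = last weight vector pins that vector to zero (same predictions).
W_ref = shift_all(W, W[-1])
print(W_ref[-1])  # [0.0, 0.0]

# Convexity: u^T H u >= 0 (up to rounding) for random directions U ...
random_forms = []
for _ in range(100):
    U = [[random.gauss(0.0, 1.0) for _ in range(d)] for _ in range(K)]
    random_forms.append(hessian_quadratic_form(W, X, U))
print(min(random_forms) >= -1e-12)

# ... and zero curvature (up to rounding) along the "shift every w by v" direction.
shift_form = hessian_quadratic_form(W, X, [v] * K)
print(abs(shift_form) < 1e-12)
```

The last check ties both ideas together. Along the direction that shifts every weight vector by the same v, the curvature vanishes. The Hessian is therefore singular (positive semi-definite rather than positive definite), and the loss is flat along a whole line of equivalent solutions. Fixing the last weight vector to zero removes that direction from the parameter space.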